Today we can actually buy and deliver systems with 10TB hard disk drives. That is a huge step forward in storage capacity, but to really take advantage of it you need software that addresses the downsides of such large capacities. At the other end of the drive spectrum, really fast flash devices that last at least as long as spinning drives are available and affordable. You need storage software that makes use of that capability too. That software should scale and make it all manageable. Last but not least, the software should outlive the hardware and take away the headaches of periodic migration.

10TB drives available for real now

The first 10TB hard disk drive was announced about a year ago by HGST. Following a line of large helium-filled hard drives, this first 10TB drive used a recording technique called SMR (shingled magnetic recording), which slows down writes and makes it suitable only for large archiving systems. Last December HGST announced that it is now shipping the first regular (non-SMR) 10TB drive, the Ultrastar He10. We can now actually order this drive from our distributors, so we also know what it costs: if you want to buy just one, we will sell it for 950 euro excluding VAT and shipping.

Large drives, the next dimension

So now that you can choose a 10TB drive, life will be easy, right? Maybe you could simply enlarge your storage capacity by replacing your older, smaller drives with larger 10TB hard drives?

Unfortunately, that is not really the case. While the 10TB He has a specified throughput of 249MB/s, a bit more than the 224MB/s of the largest-capacity 2.5” 10Krpm drive (1.8TB), that is still not much. At these rates it would take 1,800,000MB / 224MB/s ≈ 8,036 seconds, a bit over 2 hours, to stream the entire 1.8TB drive, and 10,000,000MB / 249MB/s ≈ 40,161 seconds, just over 11 hours, for the 10TB drive. The time it takes to fill up the tank, or empty it, is where the problem arises.
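The arithmetic above can be sketched in Python as a quick sanity check. The helper name `stream_time_hours` is hypothetical; it uses decimal units (1TB = 1,000,000MB), as drive vendors do, and assumes the drive sustains its specified throughput for the whole pass, which is likely a best case.

```python
def stream_time_hours(capacity_tb: float, throughput_mb_s: float) -> float:
    """Hours to read or write an entire drive sequentially.

    Decimal units, as used by drive vendors: 1TB = 1,000,000MB.
    Assumes the specified throughput is sustained for the whole pass,
    which is likely a best case.
    """
    capacity_mb = capacity_tb * 1_000_000
    seconds = capacity_mb / throughput_mb_s
    return seconds / 3600


if __name__ == "__main__":
    # Figures from the text: the 1.8TB 2.5" 10Krpm drive and the 10TB He drive.
    drives = [("1.8TB 10Krpm", 1.8, 224), ("10TB He", 10, 249)]
    for name, capacity_tb, mb_s in drives:
        print(f"{name}: {stream_time_hours(capacity_tb, mb_s):.1f} hours")
```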

Of course, if you don’t need any performance, there is no problem, right?

The short answer is: it depends. It all boils down to this: how quickly and how often do you need to access the stored data, and how acceptable is data loss over time?

If your data is not valuable (you have copies of it elsewhere) and you don’t need performance, but just need it to reside somewhere, you are fine. An example is a secondary off-site replica used for indexing or slow read access, where reading from local disk still beats the speed of a long-distance network connection. Storing such data on a few of these 10TB drives could be very effective.

If you need any kind of redundancy, continuity or availability for your data, you are in for an interesting challenge. In other words: if you actually want to use these drives, you need something new on the software side.

It might look attractive to build your storage solution out of a smaller number of these very large drives. In fact, it really is attractive to do so! The big problem lies in the ‘what if a drive fails’ scenario. Of course this is a very old problem, but existing solutions to it do not work particularly well with these large data tanks.

Solutions for failing drives

Not so long ago, the solution for failing drives was to connect several of them to a shared controller and define them as a RAID array. Let’s consider the simplest scheme that might protect you against failing drives: RAID 1, commonly known as the two-way mirror. In a typical RAID 1 set you put two drives next to each other and have the controller keep them identical. When one drive fails, you replace it, and the controller makes the new drive identical to the surviving one again by copying the whole contents of the surviving drive onto it. While this process, called a rebuild, is running, performance is lower than what the set delivers when both disks are healthy.
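A minimal sketch of the RAID 1 behaviour described above, assuming drives can be modelled as in-memory `bytearray`s. The `Mirror` class and its methods are hypothetical and only illustrate the idea: writes go to both drives, reads are served by any survivor, and a rebuild copies the entire surviving drive onto the replacement, chunk by chunk, whether or not those blocks hold data.

```python
from typing import List, Optional


class Mirror:
    """Toy two-way mirror (RAID 1). Drives are bytearrays; None marks a failed drive."""

    def __init__(self, size: int) -> None:
        self.size = size
        self.drives: List[Optional[bytearray]] = [bytearray(size), bytearray(size)]

    def write(self, offset: int, data: bytes) -> None:
        if offset < 0 or offset + len(data) > self.size:
            raise ValueError("write outside the drive")
        for drive in self.drives:
            if drive is not None:  # in degraded mode only the survivor is written
                drive[offset:offset + len(data)] = data

    def read(self, offset: int, length: int) -> bytes:
        for drive in self.drives:
            if drive is not None:  # any healthy copy will do
                return bytes(drive[offset:offset + length])
        raise IOError("both drives failed: data lost")

    def fail(self, index: int) -> None:
        self.drives[index] = None

    def rebuild(self, index: int, chunk: int = 4096) -> int:
        """Replace drive `index`, copy the survivor onto it, return bytes copied."""
        survivor = self.drives[1 - index]
        if survivor is None:
            raise IOError("no surviving drive to rebuild from")
        replacement = bytearray(self.size)
        copied = 0
        # The whole drive is copied, used or not: a block-level controller
        # typically does not know which blocks hold data.
        for start in range(0, self.size, chunk):
            block = survivor[start:start + chunk]
            replacement[start:start + len(block)] = block
            copied += len(block)
        self.drives[index] = replacement
        return copied


if __name__ == "__main__":
    mirror = Mirror(size=16 * 4096)
    mirror.write(0, b"important data")
    mirror.fail(0)                     # first drive dies
    print(mirror.read(0, 14))          # still readable from the survivor
    print(mirror.rebuild(0), "bytes copied during rebuild")
```

Note that the rebuild cost depends only on the drive size, which is exactly why it grows with 10TB drives.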

Now the problem with using two 10TB drives in such a setup is that you rely on the surviving drive to keep running until the rebuild is complete. Even if we could rebuild at the drive’s maximum specified transfer rate (which we can’t, since the drive would then no longer be usable for anything else during the rebuild), it would take at least 11 hours. In practice, rebuilding a mirror of two 10TB drives could well take days, weeks or even months, depending on how heavily the array is used. Chances are near 100% that the two drives were built in the same factory, on the same production line, on the same day, and were shipped and stored together before being put into the system. You don’t have to be a scientist to see that such drives have a real chance of failing at roughly the same time. If you need months to rebuild the set, the chance of data loss becomes unacceptably high.
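To see how load stretches the rebuild, here is a rough sketch that assumes only a fixed share of the 10TB drive’s 249MB/s sequential throughput is available for the rebuild while the rest serves normal traffic. The function name and the example shares are illustrative assumptions, not measurements.

```python
def rebuild_days(capacity_tb: float, max_mb_s: float, rebuild_share: float) -> float:
    """Days needed to rebuild a drive when only `rebuild_share` (0 < share <= 1)
    of its sequential throughput is spent on the rebuild; the rest serves users."""
    if not 0 < rebuild_share <= 1:
        raise ValueError("rebuild_share must be in (0, 1]")
    capacity_mb = capacity_tb * 1_000_000  # decimal units: 1TB = 1,000,000MB
    seconds = capacity_mb / (max_mb_s * rebuild_share)
    return seconds / 86_400


if __name__ == "__main__":
    # Hypothetical bandwidth shares left for rebuilding the 10TB He drive (249MB/s).
    for share in (1.0, 0.25, 0.05, 0.01):
        print(f"{share:>4.0%} of bandwidth: {rebuild_days(10, 249, share):5.1f} days")
```

With only 1% of the bandwidth left for rebuilding, the 10TB mirror needs well over a month, which is the scenario described above.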

RAID 1 is an easy example to grasp, but RAID arrays are usually built out of more than two drives. With RAID 5, rebuild times will be even longer, because every surviving drive has to be read to reconstruct the contents of the failed one. With RAID 1+0, 0+1 or 5+0, things get even worse: losing two drives might result in the loss of data on tens of drives.
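Why a RAID 5 rebuild touches every drive can be shown with single-parity XOR. This is a simplified sketch of one stripe with the parity block at the end (real RAID 5 rotates parity across the drives); the function names are hypothetical.

```python
from functools import reduce
from typing import List


def xor_blocks(blocks: List[bytes]) -> bytes:
    """Byte-wise XOR of equally sized blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_stripe(data_blocks: List[bytes]) -> List[bytes]:
    """One stripe: the data blocks followed by a single XOR parity block."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def reconstruct(stripe: List[bytes], lost_index: int) -> bytes:
    """Recover the block of a failed drive by XOR-ing all surviving blocks.

    Every other drive in the stripe has to be read, so a rebuild loads
    all survivors, not just one as in a mirror.
    """
    survivors = [blk for i, blk in enumerate(stripe) if i != lost_index]
    return xor_blocks(survivors)


if __name__ == "__main__":
    stripe = make_stripe([b"AAAA", b"BBBB", b"CCCC"])  # 3 data drives + 1 parity
    print(reconstruct(stripe, 1))                      # recovers b'BBBB'
```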

Of course, salvaging the data through the services of a company like Kroll Ontrack is always a possibility, but recovery might take months, during which your data is not available. By the time you get it back, you could well have gone bankrupt.

Of course you could use 3-way mirrors or RAID 6, but the problem remains the same: mitigating the failure takes so long that the chance of data loss is simply unacceptable. Besides that, if you start using more drives for redundancy, you might as well use smaller drives.
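To put numbers on the redundancy overhead, here is a small sketch of idealized usable capacity for an array of identical drives. The helper `usable_tb` and its scheme labels are hypothetical, and it ignores hot spares, metadata and filesystem overhead.

```python
def usable_tb(scheme: str, drives: int, drive_tb: float) -> float:
    """Idealized usable capacity of identical drives (no spares, metadata or FS overhead)."""
    if scheme == "2-way mirror":
        if drives < 2 or drives % 2:
            raise ValueError("needs an even number of drives")
        return drives // 2 * drive_tb
    if scheme == "3-way mirror":
        if drives < 3 or drives % 3:
            raise ValueError("needs a multiple of three drives")
        return drives // 3 * drive_tb
    if scheme == "RAID 5":
        if drives < 3:
            raise ValueError("needs at least three drives")
        return (drives - 1) * drive_tb  # one drive's worth of parity
    if scheme == "RAID 6":
        if drives < 4:
            raise ValueError("needs at least four drives")
        return (drives - 2) * drive_tb  # two drives' worth of parity
    raise ValueError(f"unknown scheme: {scheme}")


if __name__ == "__main__":
    for scheme in ("2-way mirror", "3-way mirror", "RAID 5", "RAID 6"):
        print(f"{scheme}: {usable_tb(scheme, 12, 10):g}TB usable of 120TB raw")
```

For twelve 10TB drives this gives 60TB usable as 2-way mirrors, 40TB as 3-way mirrors and 100TB as RAID 6.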

Now, one of the assumptions here is that a substantial part of the drives’ performance is being used in normal operation. That is not always the case.

The use of flash-based devices

Flash-based devices for enterprise storage systems have come a long way since the first enterprise SSD, the STec ZeusIOPS, became generally available back in 2009. Enterprise NVMe devices of 3.2TB now deliver 140,000 write IOPS and 1,600MB/s of throughput while guaranteeing 3 drive writes per day (DWPD) over a 5-year period, so storage speed bottlenecks can be solved. Price-wise, however, there is a big difference: if you want to buy just one, we will sell you the 3.2TB Ultrastar SN100 for 9,000 euro excluding VAT and shipping.
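What a 3 DWPD rating over 5 years means for a 3.2TB device can be worked out in a few lines. The function names are hypothetical, and the calculation assumes the rating applies uniformly every day of the period, counting 365 days per year.

```python
SECONDS_PER_DAY = 86_400


def lifetime_writes_tb(capacity_tb: float, dwpd: float, years: float) -> float:
    """Total TB that may be written under a drive-writes-per-day (DWPD) rating."""
    return capacity_tb * dwpd * 365 * years


def avg_write_mb_s(capacity_tb: float, dwpd: float) -> float:
    """Average write rate that uses up the daily DWPD budget exactly, 24 hours a day."""
    return capacity_tb * 1_000_000 * dwpd / SECONDS_PER_DAY


if __name__ == "__main__":
    # 3.2TB NVMe device rated for 3 DWPD over 5 years, as in the text.
    print(f"{lifetime_writes_tb(3.2, 3, 5):,.0f} TB over 5 years")
    print(f"{avg_write_mb_s(3.2, 3):.0f} MB/s average, around the clock")
```

For the 3.2TB device this comes to 17,520TB (about 17.5PB) written over 5 years, or an average of roughly 111MB/s of writes around the clock.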

If you compare the 10TB He drive at 0.095 euro (9.5 eurocent) per GB to the NVMe drive at about 2.813 euro per GB, flash is almost 30 times as expensive. SAS-based flash devices such as the SSD1600MR cost roughly the same per GB at higher capacities like 1,600GB and 1,920GB, although their performance is considerably lower: 30,000 write IOPS at 700MB/s.
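The price-per-GB comparison as a sketch, using the single-unit prices quoted above and decimal gigabytes (10TB = 10,000GB, 3.2TB = 3,200GB). The helper name is hypothetical.

```python
def euro_per_gb(price_eur: float, capacity_gb: float) -> float:
    """Price per gigabyte (decimal GB, as drive vendors count)."""
    return price_eur / capacity_gb


if __name__ == "__main__":
    hdd = euro_per_gb(950, 10_000)    # 10TB He drive
    nvme = euro_per_gb(9_000, 3_200)  # 3.2TB Ultrastar SN100
    print(f"HDD:  {hdd:.4f} euro/GB")
    print(f"NVMe: {nvme:.4f} euro/GB")
    print(f"NVMe costs {nvme / hdd:.1f}x as much per GB")
```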

With enterprise SAS SSDs of nearly 2TB available, the choice is an easy one for storage systems that do not need to scale beyond 100TB. As long as the overhead of the extra rack units of equipment needed to house the drives is small, it makes sense to use flash only. Put two of those 1,920GB drives in a mirror and, when one fails, rebuilding the entire drive at 700MB/s takes about 45 minutes, and maybe 2 hours under load.

Combining flash and large capacity drives

There are a lot of good reasons to combine flash devices with large-capacity hard drives in one storage system. If you pair the speed of a small number of flash devices, like the Ultrastar SN100 or the SSD1600MR, with the density of high-capacity drives, you can get the best of both worlds.
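One way to quantify the trade-off is a capacity-weighted price per GB for a mixed configuration. The sketch below uses the single-unit prices quoted earlier; the example mix of two 3.2TB NVMe devices and twelve 10TB drives is a hypothetical configuration, not a recommendation.

```python
from typing import Iterable, Tuple


def blended_euro_per_gb(parts: Iterable[Tuple[int, float, float]]) -> float:
    """Capacity-weighted price per GB for (count, unit_price_eur, unit_capacity_gb) parts."""
    parts = list(parts)
    total_eur = sum(count * price for count, price, _ in parts)
    total_gb = sum(count * gb for count, _, gb in parts)
    return total_eur / total_gb


if __name__ == "__main__":
    # Hypothetical mix: 2 x 3.2TB Ultrastar SN100 + 12 x 10TB He drives.
    mix = [(2, 9_000, 3_200), (12, 950, 10_000)]
    print(f"{blended_euro_per_gb(mix):.3f} euro/GB")
```

For that mix the flash adds 6,400GB to 120,000GB of disk, and the blended price comes to about 0.23 euro per GB.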

There are quite a few ways to accomplish this and they all involve storage software.

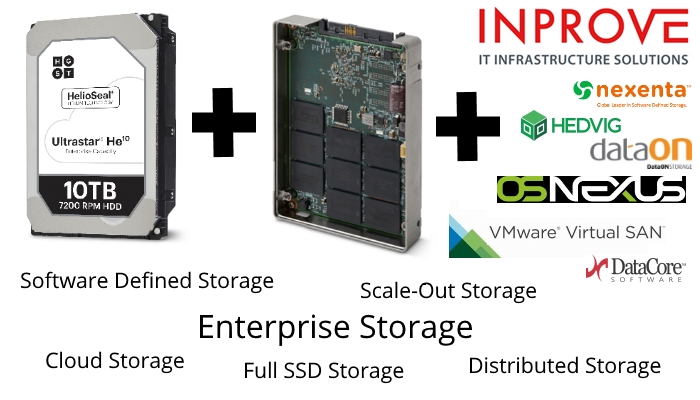
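One of those ways is to put a small flash tier in front of the large drives as a read cache. The sketch below is a hypothetical toy that assumes an LRU (least recently used) eviction policy and write-through to the disk tier; the `TieredStore` class is illustrative only, and real storage software uses its own, more elaborate placement policies.

```python
from collections import OrderedDict
from typing import Dict, Hashable


class TieredStore:
    """Toy two-tier store: a small flash tier caching hot blocks of a large HDD tier."""

    def __init__(self, flash_blocks: int) -> None:
        self.flash_blocks = flash_blocks
        self.flash: "OrderedDict[Hashable, str]" = OrderedDict()  # least -> most recently used
        self.hdd: Dict[Hashable, str] = {}                         # capacity tier
        self.hits = 0
        self.misses = 0

    def write(self, block_id: Hashable, data: str) -> None:
        # Write-through: the HDD tier always holds the authoritative copy.
        self.hdd[block_id] = data
        self._cache(block_id, data)

    def read(self, block_id: Hashable) -> str:
        if block_id in self.flash:
            self.hits += 1
            self.flash.move_to_end(block_id)  # mark as most recently used
            return self.flash[block_id]
        self.misses += 1
        data = self.hdd[block_id]             # slow path: read from the HDD tier
        self._cache(block_id, data)
        return data

    def _cache(self, block_id: Hashable, data: str) -> None:
        self.flash[block_id] = data
        self.flash.move_to_end(block_id)
        if len(self.flash) > self.flash_blocks:
            self.flash.popitem(last=False)    # evict the least recently used block
```

The flash tier only needs to be large enough for the working set; everything else stays on the dense, cheap drives.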

Software-defined Storage or SDS

Software-defined Storage, or SDS, is the term that has been used for the last 5 years for systems that present storage independently of the underlying hardware. That means you can buy hardware from one or more vendors and software from another vendor. This gives you flexibility and independence in your infrastructure, and that freedom brings financial benefits in both the short and the long term.

The type of software to choose depends on the use case, scale, required performance and size of the storage system. I will discuss the various software options we provide in my next technical posts.